Problem

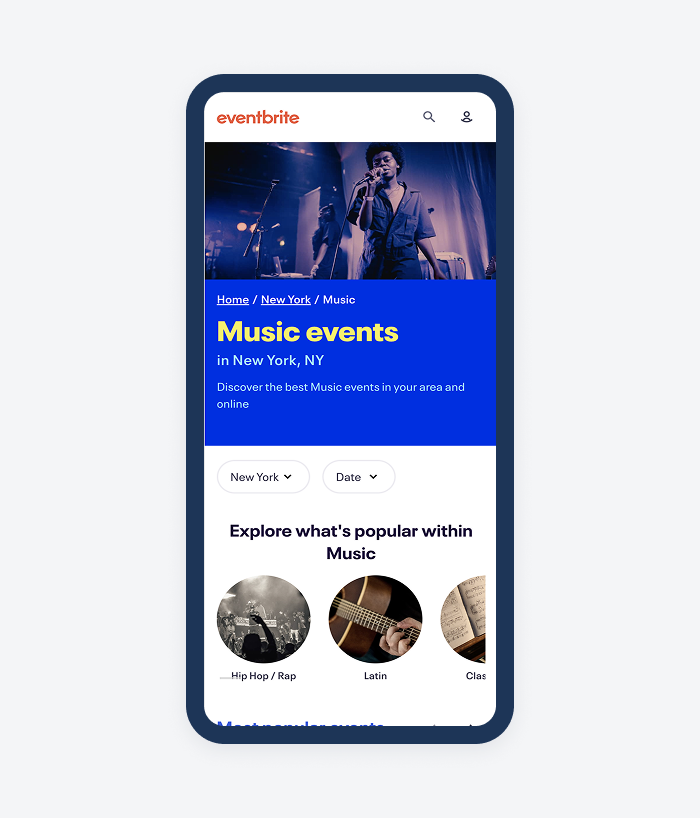

Eventbrite’s main users, Social Scouts, love planning and attending Eventbrite events to hang out with friends, meet new people, and experience new things. When discovering events, they often look for personalized inspiration, not just the most popular event.

Through our research and qualitative data, we learned that although Social Scouts value discovery, they struggle to express what they’re looking for with traditional search tools:

- Filtering and sorting felt “rigid” and didn’t always capture what users intended.

- Users ran multiple searches, applied whatever filters we offered, and changed locations. They were clearly willing to run several queries to find a matching event.

Requests for more personalized events accounted for almost half of all comments about Eventbrite’s discovery surfaces.

Defining the Experiment

Our search team regularly ran A/B experiments. We strategized together in working sessions, refining ideas and hypotheses while I presented proposed designs. Given our knowledge of Social Scouts and their search and browse behaviors, we felt confident in the following hypothesis for the project:

- IF we use AI and LLMs to power a more inspiring, flexible, and conversational search experience, THEN users will be better guided toward finding a great event, BECAUSE they will be able to express their intent and feel more confident about finding the right event.

This would be our north star throughout the initiative.

Explorations

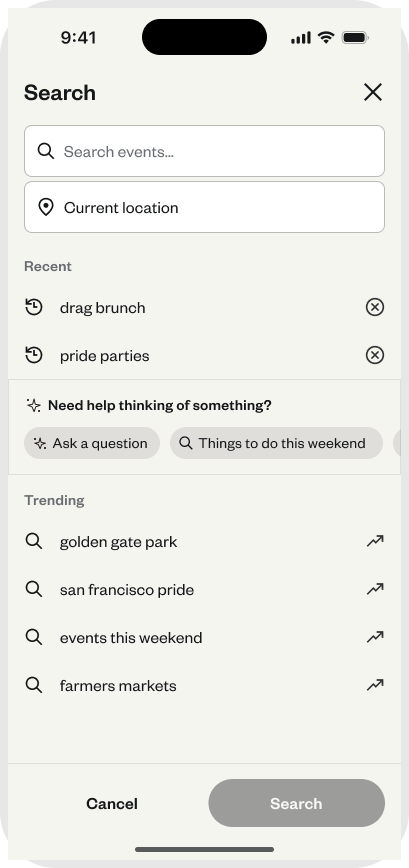

Several pieces needed exploring before the team was ready to test: a comparative chat feature audit, the chat’s colors, an icon, an entry point, and the chat experience itself. I broke each step down in Figma and started exploring.

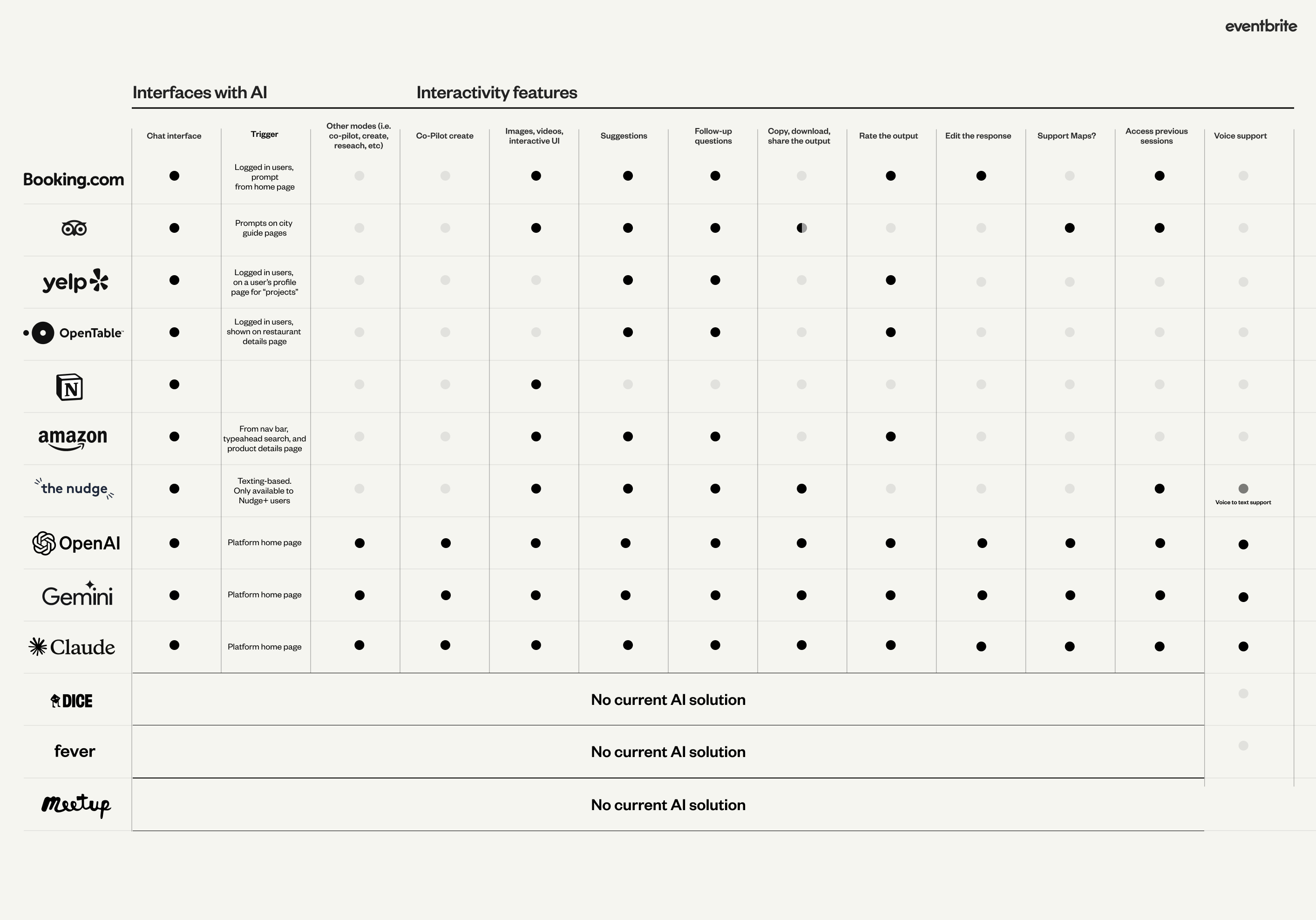

Chat feature audit

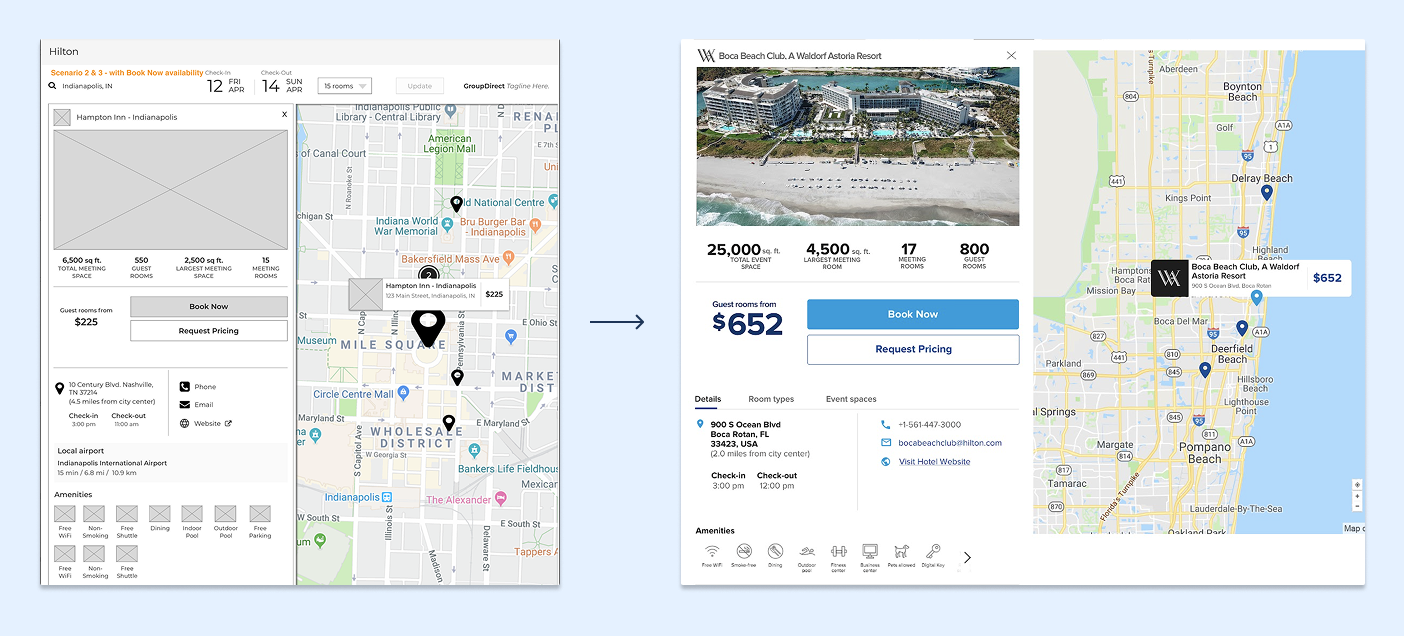

For the audit, I recorded the flows of comparable companies’ AI/ML chats and mapped them back to common feature offerings. I also looked at best-in-class experiences like ChatGPT, Gemini, and Claude. I brought the table above into collaboration sessions with product and development to discuss how our MVP could best meet expectations from both a user and a competitive standpoint. Here’s how this influenced my thinking:

- I was surprised by the lack of chat history. I had mocked up this flow in my initial workflows, but it felt like something we could bring into a later version.

- I was also surprised by the lack of AI/ML chat in the event-discovery space. We were intrigued to be one of the first players here.

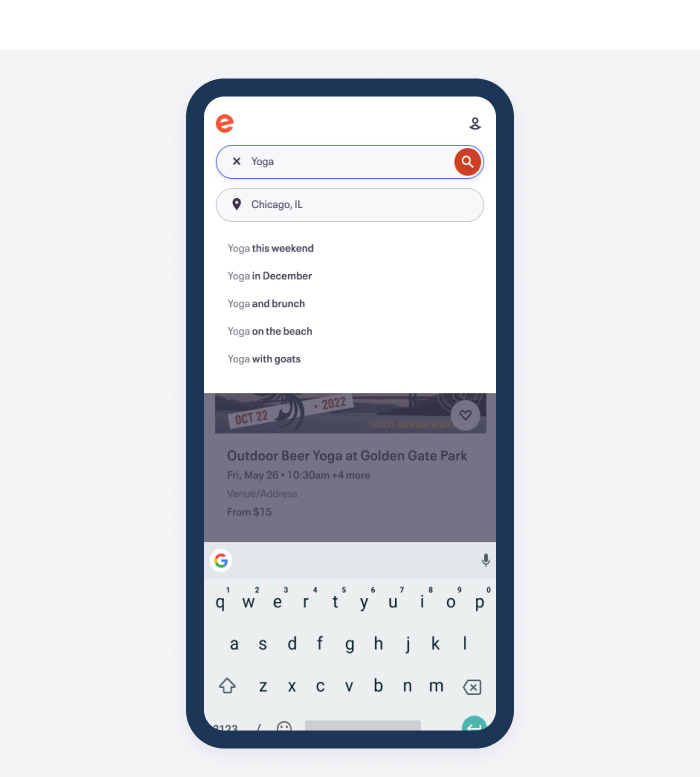

- I pushed hard for suggestion support. The team had thought it could punt on this until a later version, but once I brought the audit to them, it was clear we needed to be competitive on this feature.

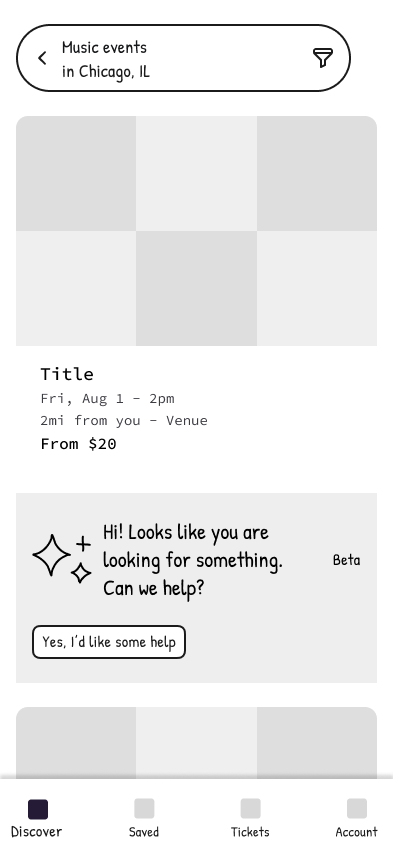

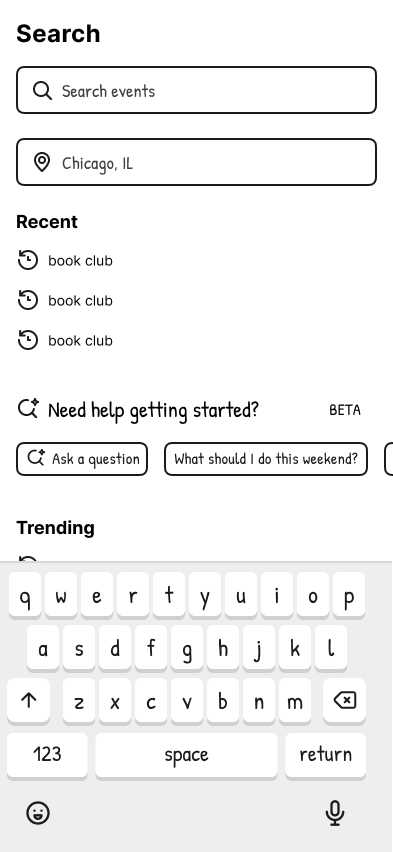

Next, I explored different entry point and chat result options, and put together some potential icon designs for the chat feature.

Wireframes

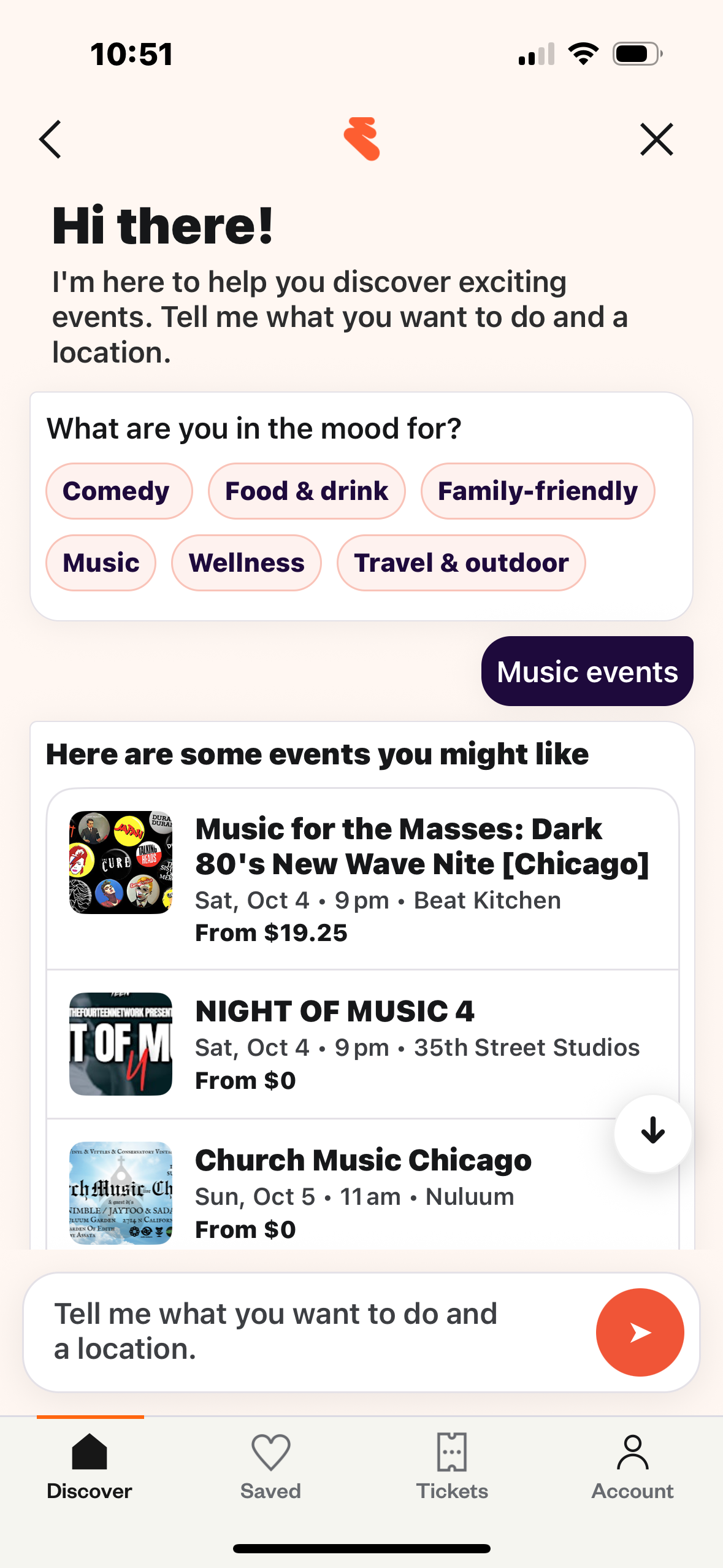

Research

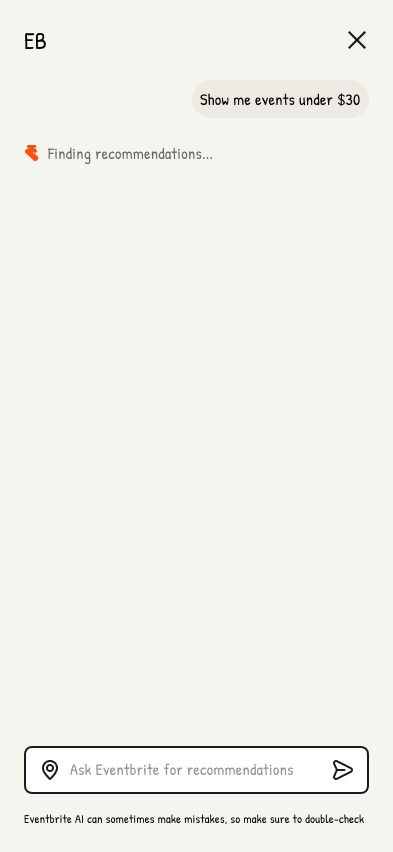

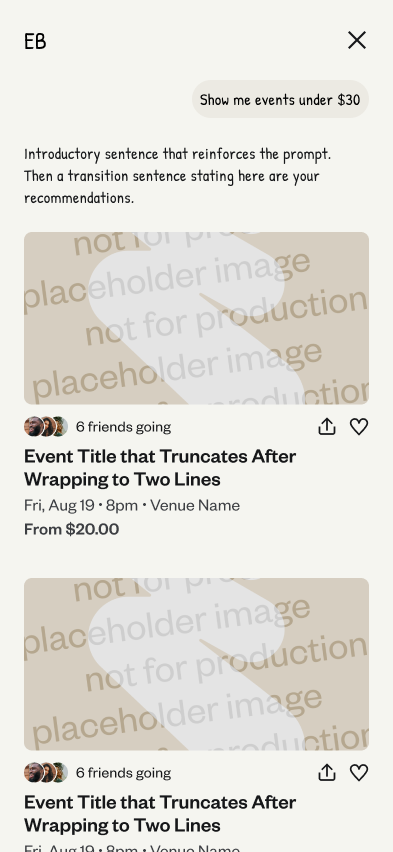

I worked with development to quickly prototype our first experiment. I used Figma Make to put together initial ideas to share with the team. A developer then took this prototype and built a coded version in an environment we could test with users (screenshot shown below). We chose a coded environment mainly because we wanted the chat to feel as real as possible.

For the usability test, we wanted to learn two main things. I collaborated with the team’s PM to craft these objectives:

- Learn about user sentiment toward, and trust in, AI tools for finding events.

- Learn where a chatbot-style experience would fit in the event-finding process.

After we launched the test on UserTesting.com, we received responses from 8 target users: people aged 20–35 who actively search for events and things to do, and who are the ones planning events for their group. After synthesizing the results, these were our main takeaways:

- Users with higher intent preferred regular search.

- Users had high expectations of the AI tool and were turned off by results that were not relevant.

- We needed to build more trust into the events shown. Whether by adding an AI summary of each event or using badges to describe it, we could do a better job of establishing trust in the results.

With these takeaways in mind, we moved to our first experiment.

Here is the Figma Make prototype I took to developers. After I shared the idea, development built their own prototype on top of it using real event data, and that is what we took to usability testing. It was fun to see Figma Make generate cross-functional excitement and speed up iteration.

Experiment 1

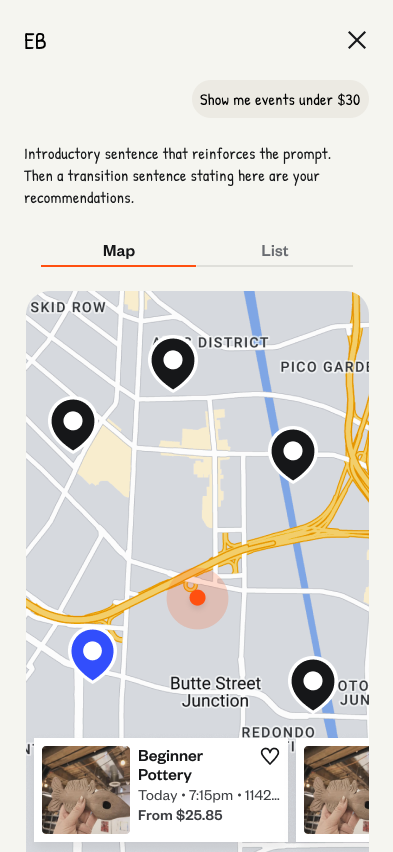

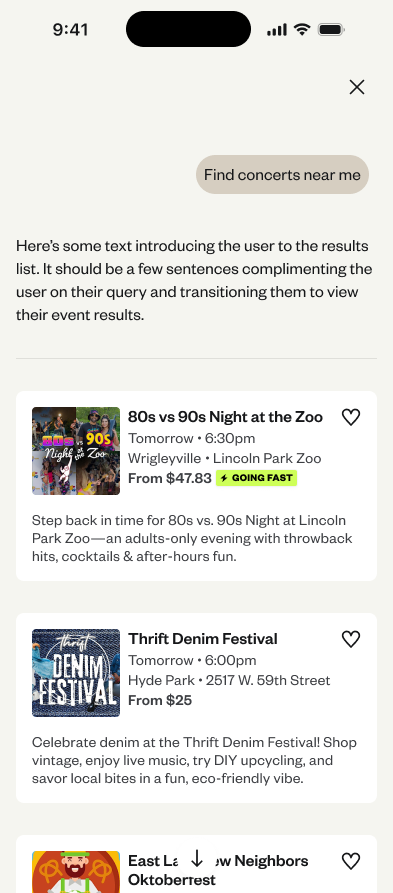

After collaborating with product, development, and engineering, we put together the experience for our first experiment. Our primary metric was increasing paid ticket orders, and we also wanted to learn how much activity the Natural Language Search model would receive. Here is what we decided for the first test:

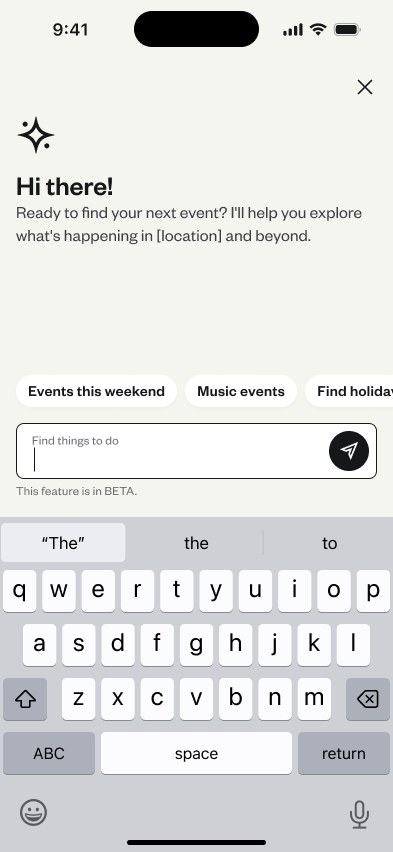

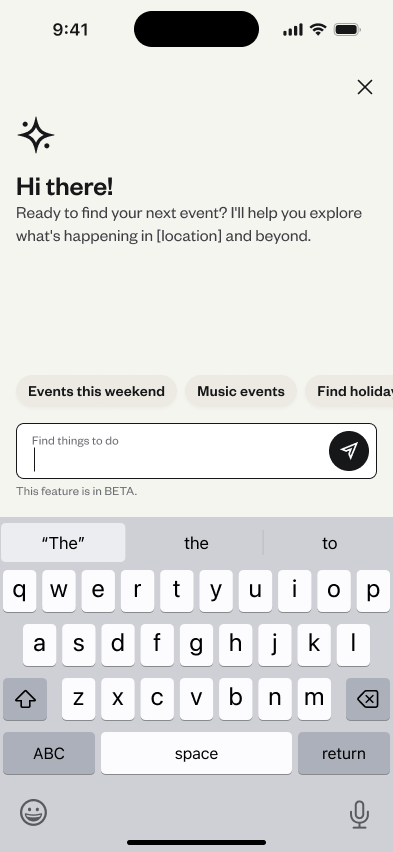

- Introduced an AI chat entry point within the search takeover screen

- Designed onboarding, empty states, and conversational UI scenarios

- Partnered with product and engineering to QA the designs and plan for LLM question and response scenarios.

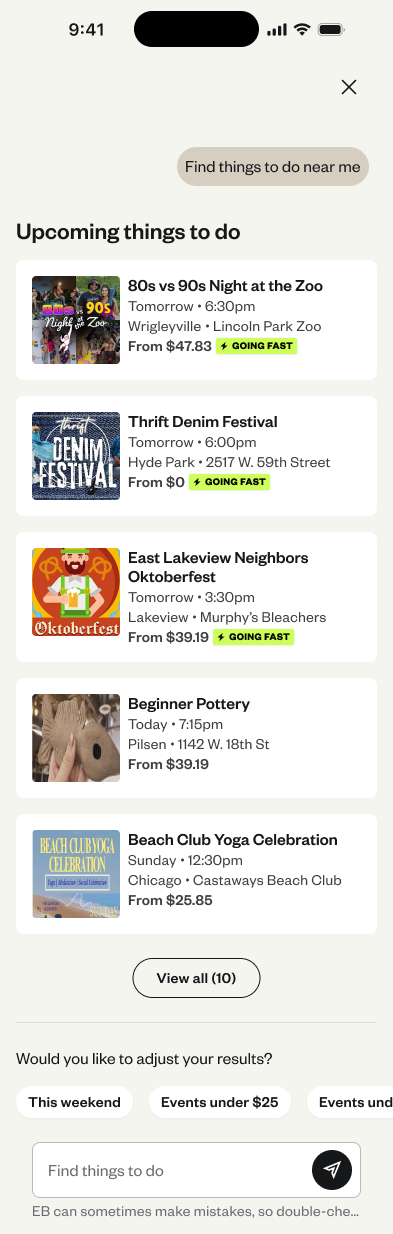

Results: Only ~1% of users engaged with the chat from the search page entry point. Other interesting usage data:

- 37% of users who entered the chat clicked through to an event listing page, compared with the typical 1–2% from search.

- The most common query length was 3 words.

- Most queries were time-based, such as “What should I do this weekend?”

The click-through rate to listing pages raised eyebrows, since it was so much higher than for a typical search. We decided to keep experimenting with the chat entry point and to keep improving the results returned by the LLM.

Experiment 2

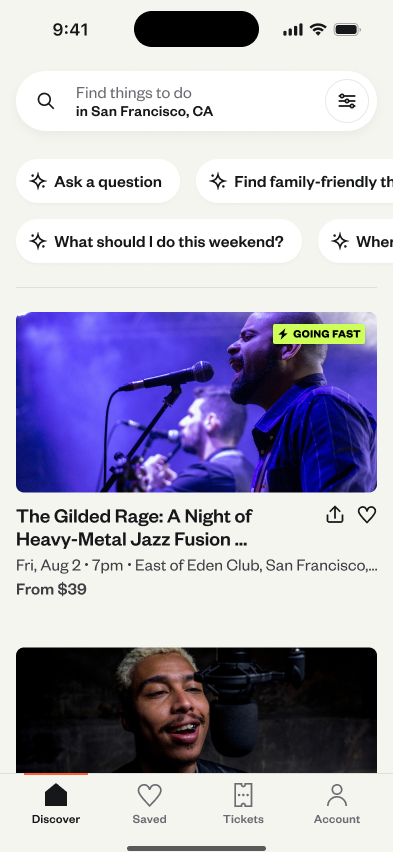

For experiment 2, we wanted to surface the chat feature earlier in the search and discovery process to see how much this would improve engagement. Intrigued by the 37% click-through rate into events once users reached the chat page, we wanted to see whether it held when the entry point was a set of home page pills below the search bar.

We also improved the results our infrastructure returned, focusing on queries about dates, categories, and time. These were the most popular query types in our data from the first experiment.

After two weeks of experimentation, we achieved a win! Users who went through the AI Natural Language Search flow converted at a higher rate than users who ran a regular search. We achieved more than 33,000 additional orders and $200,000 in additional yearly revenue.

Results:

- 33,348 additional paid ticket orders per year

- An additional $200,000 in paid ticket revenue per year